Building an AI coding toolbox that actually works

A year of lessons from coding agents, context curation, and a personal memory bank — tailored for Coodyans and friends.

Embedded systems · Web · AI workflows

A bit about me

One hour, not a monologue.

- ~40 minutes of talking: what’s happened, how I work, what actually works

- Interrupt whenever. This isn’t a stage lecture — it’s a dialogue

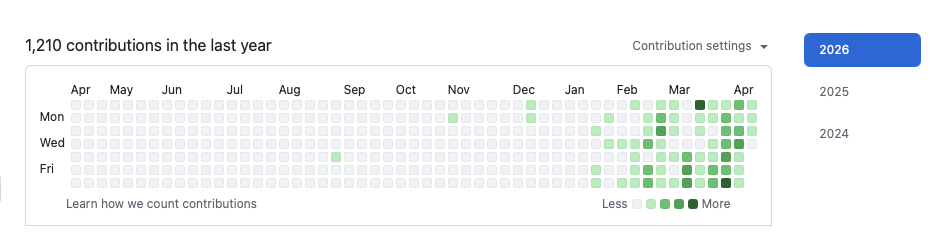

18 months — and a 36× leap in AI time horizon
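For scale, assume the growth was smoothly exponential over the 18 months. That is a simplification, because real gains arrive in model-release steps. Under that assumption, a 36× increase implies a doubling time of roughly three and a half months:

$$
n = \log_2 36 \approx 5.17\ \text{doublings}, \qquad T_{\text{double}} \approx \frac{18\ \text{months}}{5.17} \approx 3.5\ \text{months}
$$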

METR, July 2025: −19 %

What the study found

- Experienced OSS developers on their own codebases

- They predicted: +24 % faster

- Reality: −19 % slower

- Large, mature projects → the agent has to read a lot of context

Why it’s not the end of the story

- The study ran Feb–Jun 2025: the Claude 3.5/3.7 Sonnet era

- Q4 2025 tooling has completely different context mechanics

- Stack Overflow 2025 survey: positive sentiment toward AI tools fell 70 % → 60 %

- The lesson: generic “use AI” ≠ value. Workflow is what decides.

Model ≠ Harness

The model

- Claude Opus 4.6, GPT 5.4, Gemini 3.1

- A frozen file of 100–1000 GB

- Knows nothing about your project

- Knows nothing about your filesystem, terminal, or test suite

- Without a harness: a very knowledgeable buddy in a chat window

The harness

- Claude Code, Codex, Cursor, OpenCode

- Gives the model tools: read file, run command, search, edit

- Manages context, memory, agent loops

- Defines how MCP servers, skills, and subagents work

- Ongoing debate on where the value sits — models, harness, or both

One model, five surfaces

- Terminal (CLI) — claude, codex. Right inside your dev environment, sees git, files, processes. My primary surface.

- Desktop app — Claude Desktop, Cursor. Good when you want to chat with documents or kick off jobs in parallel.

- Cloud / web — claude.ai, chatgpt.com. Good for research, quick questions.

- Mobile — Claude iOS, ChatGPT. I mostly use it to log into my own memory system on the go.

- Pi / always-on — I also run an agent on a Raspberry Pi that does background work while I sleep.

From craft to factory

Craft (where we came from)

- One developer, one task, full attention

- The code is a personal expression

- “It takes the time it takes”

- Quality lives in the craftsman’s head

Factory (where we’re heading)

- The developer orchestrates multiple agents in parallel

- Quality lives in the process: tests, CI, context, loops

- Repetitive work becomes automation, not grind

- Time freed up for architecture, design, decisions

Why embedded has felt left behind

- Training-data skew. Ratio of public React : STM32L4 DMA examples ≈ 1000 : 1. The model is on thin ice.

- Hardware context. The LLM can’t see your wiring, your missing pull-up resistor, your actual logic analyzer.

- Toolchain lock-in. CubeIDE, Keil µVision, IAR. Your web colleagues have Cursor and Claude Code.

- NDA / IP / air-gap. Reference manuals can’t leave the network. ChatGPT is often banned outright.

- Real-time, safety, resources. AI-generated code misses race conditions and timing deadlines, and is almost always too heavy.

The conversation has shifted

- Context engineering. A CLAUDE.md with target MCU, memory map, RTOS, and the critical “never do” rules produces completely different output.

- Hardware-aware agents. Embedder (YC S25) ingests datasheets, refuses to generate code for registers not cited in the docs, runs air-gapped.

- MCP servers for hardware loops. Serial Console MCP, probe-rs MCP — the agent flashes, reads RTT, tests on real hardware.

- Chalmers / Software Center paper (Jan 2026): Swedish industry partners demanding MCP-compliant APIs on their tools.

- Beningo’s insight: ~20 % of firmware is register manipulation. The rest is state machines, protocols, business logic — that’s what AI is good at.
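Beningo’s split can be made concrete. The protocol layer is ordinary logic that an agent can write and a host machine can test without any hardware attached. Below is a minimal sketch of such a state machine. It assumes a made-up frame format: a start byte 0x7E, a length byte, the payload, then an XOR checksum. The names `FrameParser` and `START_BYTE` are hypothetical.

```python
from __future__ import annotations

from enum import Enum, auto

START_BYTE = 0x7E  # hypothetical frame format: START, LEN, PAYLOAD[LEN], XOR checksum


class State(Enum):
    WAIT_START = auto()
    LENGTH = auto()
    PAYLOAD = auto()
    CHECKSUM = auto()


class FrameParser:
    """Byte-at-a-time frame parser: pure protocol logic, no register access."""

    def __init__(self) -> None:
        self.reset()

    def reset(self) -> None:
        self.state = State.WAIT_START
        self.expected = 0
        self.payload = bytearray()

    def feed(self, byte: int) -> bytes | None:
        """Consume one byte; return the payload when a frame with a valid checksum ends."""
        if self.state is State.WAIT_START:
            if byte == START_BYTE:  # ignore line noise until a start byte arrives
                self.state = State.LENGTH
        elif self.state is State.LENGTH:
            self.expected = byte
            self.state = State.PAYLOAD if byte > 0 else State.CHECKSUM
        elif self.state is State.PAYLOAD:
            self.payload.append(byte)
            if len(self.payload) == self.expected:
                self.state = State.CHECKSUM
        else:  # State.CHECKSUM
            checksum = 0
            for b in self.payload:
                checksum ^= b
            frame = bytes(self.payload)
            self.reset()  # always resynchronise, whether or not the frame was valid
            if byte == checksum:
                return frame
        return None
```

`feed` takes one byte at a time and never touches hardware. The same logic could therefore sit behind a UART interrupt on the target, while the host-side version gives the agent a fast test loop.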

Context → skills → subagents

- CLAUDE.md / AGENTS.md — auto-loaded every session. 20–50 lines. Architecture, conventions, do’s and don’ts, where things live.

- Skills — reusable playbooks. I have 19: /commit, /close, /deploy, /debate-codex, /review-pr. Write once, use a hundred times.

- Subagents — model tiering per task. Haiku for grep, Sonnet for implementation, Opus for architecture. Protects root context when one task dumps 100k tokens of logs.

- MCP servers — the agent gets access to your APIs: Fortnox, Microsoft 365, Google Workspace, Playwright, your own memory system.
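Model tiering and context protection can be sketched in a few lines. This sketch assumes a hypothetical task taxonomy (`search`, `implement`, `architecture`) and uses the tier names from the slide as plain strings. A real harness would map them to actual model IDs and call its own subagent mechanism.

```python
from dataclasses import dataclass

# Hypothetical tier table: cheapest model for search, mid tier for implementation,
# strongest for architecture. Replace the strings with real model IDs for your harness.
TIERS = {
    "search": "haiku",
    "implement": "sonnet",
    "architecture": "opus",
}


@dataclass
class Subtask:
    kind: str
    prompt: str


def pick_model(task: Subtask, default: str = "sonnet") -> str:
    """Return the model tier for a subtask, falling back to a default tier."""
    return TIERS.get(task.kind, default)


def summarize_for_root(raw_output: str, max_chars: int = 2000) -> str:
    """Bound what flows back to the root context: keep head and tail, drop the middle."""
    if len(raw_output) <= max_chars:
        return raw_output
    half = max_chars // 2
    head = raw_output[:half]
    tail = raw_output[len(raw_output) - half:]
    dropped = len(raw_output) - len(head) - len(tail)
    return f"{head}\n...[{dropped} chars omitted]...\n{tail}"
```

The truncation step is the point. However much a subagent dumps, only a bounded summary returns to the root context.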

AI-ready architecture

- Docs as a runnable surface — not a PDF collecting dust. A README, an ARCHITECTURE.md, a CLAUDE.md that the agent actually reads.

- Self-describing APIs — clear names, good error messages, examples. For humans and for models alike.

- AI agent surface — MCP or CLI, one or the other. If an internal tool has no safe way for an agent to reach it today, it’s behind. Pick the surface that fits — but pick one.

- Bounded modules — small surfaces, clear contracts. Lets you hand off a slice of code to an agent without loading the whole project.

- Testability — a fast test suite, clear “it works” signals. Without it, the agent has no feedback loop.

Team CLI vs Team MCP

If you could only pick one abstraction to extend your agent’s abilities — which one do you take?

Make everything scriptable

- Example: Sagascript, a writing tool whose audience is a human using a GUI. I still build a functionally complete CLI first.

- Why? Debugging and testing are 5× faster. The agent can run the CLI in loops. No GUI click automation needed.

- Bonus: my own usage keeps migrating to the terminal. I just transcribed a call by asking Claude Code to run sagascript on the recording; the result landed straight in the chat.

- Consequence: all my internal tools are CLI-first. noxctl for Fortnox. sagascript for voice-to-text transcription. Same surface, same habits — agent or me, no difference.
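The sketch below shows the CLI-first pattern. It uses a hypothetical `textstats` tool, not sagascript or noxctl themselves. The pieces are a pure functional core, a thin `argparse` wrapper, a `--json` flag for agents, and error messages that say what to try next.

```python
from __future__ import annotations

import argparse
import json
import sys


def word_stats(text: str) -> dict[str, int]:
    """Functional core: pure logic, no I/O, trivially testable."""
    return {
        "words": len(text.split()),
        "lines": len(text.splitlines()),
        "chars": len(text),
    }


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser(
        prog="textstats",
        description="Hypothetical CLI-first tool: print word, line and character counts.",
    )
    parser.add_argument("path", help="file to analyse, or '-' to read stdin")
    parser.add_argument("--json", action="store_true",
                        help="machine-readable output for agents and scripts")
    return parser


def main(argv: list[str] | None = None) -> int:
    args = build_parser().parse_args(argv)
    try:
        if args.path == "-":
            text = sys.stdin.read()
        else:
            with open(args.path, encoding="utf-8") as f:
                text = f.read()
    except OSError as exc:
        # Self-describing error: what failed, why, and what to try instead.
        print(f"error: cannot read {args.path!r} ({exc.strerror or exc}); "
              "pass an existing file or '-' for stdin", file=sys.stderr)
        return 2
    stats = word_stats(text)
    if args.json:
        print(json.dumps(stats))
    else:
        print(f"{stats['words']} words, {stats['lines']} lines, {stats['chars']} chars")
    return 0


if __name__ == "__main__":
    sys.exit(main())
```

Saved as, say, `textstats.py`, running `python textstats.py notes.txt --json` prints one JSON object that an agent can parse in a loop. Tests can call the same `main(argv)` directly, without spawning a process.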

I actually prefer a clean terminal.

Claude Code in my terminal isn’t just a coding tool anymore. It covers email, calendar, files, transcription and, most recently, Fortnox via noxctl. Same surface for writing code, reading mail, or booking an invoice.

Red/green TDD with the agent

1. Ask the agent to first write a failing test that describes what you want.

2. Run the test and verify it’s red.

3. Let the agent implement until the test goes green.

4. “Run the tests first”: four words that anchor every session to reality.

5. Bake it in. Put the literal line “Use red/green TDD” in your CLAUDE.md: one sentence, every session, no re-explaining.
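Here is the red/green loop sketched with a hypothetical `parse_duration` helper and plain pytest-style asserts. In a real session the test is written and run first. It fails with a `NameError` (red), and only then does the implementation appear (green). Both parts are shown in one file here so that it runs.

```python
import re


# Step 1 (red): the agent writes this test before parse_duration exists.
# Running it at that point fails with NameError, which is the "red" you verify.
def test_parse_duration():
    assert parse_duration("90s") == 90
    assert parse_duration("2m") == 120
    assert parse_duration("1h30m") == 5400
    try:
        parse_duration("soon")
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for unparseable input")


# Step 3 (green): the agent implements until the test passes.
_UNITS = {"h": 3600, "m": 60, "s": 1}
_TOKEN = re.compile(r"(\d+)([hms])")


def parse_duration(text: str) -> int:
    """Parse strings like '1h30m' or '90s' into seconds."""
    pos, total = 0, 0
    for match in _TOKEN.finditer(text):
        if match.start() != pos:  # a gap means unparseable characters
            break
        total += int(match.group(1)) * _UNITS[match.group(2)]
        pos = match.end()
    if pos == 0 or pos != len(text):
        raise ValueError(f"cannot parse duration {text!r}; expected e.g. '90s', '2m', '1h30m'")
    return total


if __name__ == "__main__":
    test_parse_duration()
    print("green")
```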

Hoard the things you build yourself

- Every skill, tool, agent you write should produce data — not just perform a task.

- Logs → analytics → evidence of what works → improvements.

- Example: my /debate-codex skill logs which critiques Codex raises vs. which ones the primary model caught on its own.

- After 23 debates, 294 critiques: single-model self-review misses 77 % of what cross-model debate catches — two models reading the same text see different things.
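One way to hoard that kind of signal is a JSON Lines log. This sketch assumes a hypothetical schema in which each critique records who found it (`self`, `cross`, or `both`). It is not necessarily how /debate-codex stores or computes its numbers. It only shows how a miss rate like the 77 % above falls out of a plain log.

```python
from __future__ import annotations

import json
from pathlib import Path

VALID_SOURCES = {"self", "cross", "both"}  # hypothetical schema


def log_critique(log_path: Path, debate_id: str, critique: str, found_by: str) -> None:
    """Append one critique as a JSON line; the log is the data the skill hoards."""
    if found_by not in VALID_SOURCES:
        raise ValueError(f"found_by must be one of {sorted(VALID_SOURCES)}, got {found_by!r}")
    record = {"debate_id": debate_id, "critique": critique, "found_by": found_by}
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")


def load_log(log_path: Path) -> list[dict]:
    """Read every non-empty JSON line back into a list of records."""
    lines = log_path.read_text(encoding="utf-8").splitlines()
    return [json.loads(line) for line in lines if line.strip()]


def self_review_miss_rate(records: list[dict]) -> float:
    """Share of critiques caught by cross-model debate that self-review did not raise."""
    caught_by_cross = [r for r in records if r["found_by"] in {"cross", "both"}]
    if not caught_by_cross:
        return 0.0
    missed = sum(1 for r in caught_by_cross if r["found_by"] == "cross")
    return missed / len(caught_by_cross)
```

Two calls are enough to turn a skill into evidence: `log_critique` after every debate, and `self_review_miss_rate(load_log(path))` at review time.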

The bigger thesis

- Karpathy’s autoresearch: autonomous agents run ML experiments overnight, measure val_bpb, keep or discard changes.

- A fixed 5-minute training budget per experiment → directly comparable results.

- The human writes a markdown file that steers the exploration — not Python directly.

- Translates to your day job: hoard signals + agent loops + clear metric = self-improving system.
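At its core, the keep-or-discard loop is greedy search against a single metric. Below is a minimal sketch with toy stand-ins. `toy_evaluate` plays the role of a fixed-budget training run (lower is better, like val_bpb), and `toy_propose` plays the agent suggesting a change. Neither function comes from autoresearch itself.

```python
from __future__ import annotations

import random
from typing import Callable

Config = dict[str, float]


def keep_or_discard_loop(
    baseline: Config,
    propose: Callable[[Config, random.Random], Config],
    evaluate: Callable[[Config], float],
    iterations: int,
    seed: int = 0,
) -> tuple[Config, float, list[dict]]:
    """Propose a change, evaluate it, keep it only if the metric improves (lower is better)."""
    rng = random.Random(seed)
    best, best_score = baseline, evaluate(baseline)
    history: list[dict] = []
    for i in range(iterations):
        candidate = propose(best, rng)
        score = evaluate(candidate)
        kept = score < best_score
        history.append({"iteration": i, "score": score, "kept": kept})  # hoard every signal
        if kept:
            best, best_score = candidate, score
    return best, best_score, history


# Toy stand-ins (hypothetical): a real evaluation would be a fixed-length training run.
def toy_evaluate(cfg: Config) -> float:
    return (cfg["lr"] - 0.3) ** 2 + 1.0


def toy_propose(cfg: Config, rng: random.Random) -> Config:
    return {"lr": cfg["lr"] + rng.uniform(-0.1, 0.1)}


if __name__ == "__main__":
    best, score, history = keep_or_discard_loop({"lr": 1.0}, toy_propose, toy_evaluate, 200)
    print(best, score, sum(h["kept"] for h in history))
```

The loop logs every iteration, whether or not it keeps the change, so it hoards its own evidence about what worked.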

It’s not just code

- Regulation & compliance. EU AI Act, GDPR, DORA, CRA, NIS2. Feed the agent the regulation + your architecture → a gap analysis in an hour, not a week.

- Risk & threat modelling. STRIDE walkthroughs, dependency / supply-chain review, CVE triage. Two models disagreeing on the same system beats one human guessing.

- Documentation & knowledge. Architecture docs, runbooks, onboarding guides, handovers — generated from the code that actually runs, not from memory.

- Research & evaluation. Vendor comparisons, framework shortlists, competitor landscapes. A well-prompted afternoon replaces a consultant-week of desk research.

- Proposals, specs, client comms. RFP responses, tech specs, RFC drafts. Translate between engineer-speak and client-speak without losing precision.

Multi-agent orchestration

This is a very new area. Nobody has landed the pattern yet — and new model releases keep re-opening the question.

- Expert agents. One per domain — security, docs, tests, refactor. Cheap to write, hard to coordinate.

- Agent swarms. Many peers on the same task, debating or voting. Catches blind spots (see Hoard slide: 77 %). Expensive; fan-out is real.

- Coordinator + workers. A main agent plans, dispatches, integrates. Simple to reason about, bottlenecked by the planner’s context.

- Scaffolding & harnesses. Hand-built pipelines — explicit graph of who does what. More control, less magic, more maintenance.

- …or just wait. Every six months, a smarter single model solves in one shot what previously needed a swarm. Betting on orchestration can be betting against the model curve.
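Two of the patterns above can be sketched without any model calls. The first is a coordinator that plans, dispatches to workers in parallel, and integrates the results. The second is a majority vote for the swarm case. The planner, the roles, and `echo_worker` are hypothetical placeholders for real agent invocations.

```python
from __future__ import annotations

from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Worker = Callable[[str], str]
ROLES = ("tests", "docs", "security")  # hypothetical expert roles


def plan(task: str) -> list[tuple[str, str]]:
    """Hypothetical planner: split a task into (role, subtask) pairs. A real one would ask a model."""
    return [(role, f"{role}: {task}") for role in ROLES]


def coordinate(task: str, workers: dict[str, Worker], max_parallel: int = 3) -> dict[str, str]:
    """Coordinator + workers: plan, dispatch subtasks in parallel, integrate results by role."""
    subtasks = plan(task)
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        futures = {role: pool.submit(workers[role], sub) for role, sub in subtasks}
        return {role: fut.result() for role, fut in futures.items()}


def majority_vote(answers: list[str]) -> str:
    """Swarm variant: many peers answer the same question; keep the most common answer."""
    if not answers:
        raise ValueError("majority_vote needs at least one answer")
    winner, _count = Counter(answers).most_common(1)[0]
    return winner


def echo_worker(subtask: str) -> str:
    """Stand-in for an agent call."""
    return f"done ({subtask})"


if __name__ == "__main__":
    print(coordinate("add retries to the UART driver", {role: echo_worker for role in ROLES}))
    print(majority_vote(["race condition", "ok", "race condition"]))
```

`plan` is where the coordinator bottleneck shows up. Every subtask the workers see has to pass through the planner’s view of the task.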

Resources

Simon Willison — Agentic Engineering Patterns

simonwillison.net/guides/agentic-engineering-patterns · read the whole thing, it’s worth it.

Karpathy — autoresearch

github.com/karpathy/autoresearch · the thesis on self-improving loops.

Beningo — Why Claude Code for Firmware Development Matters

beningo.com/why-claude-code-for-firmware-development-matters · the best embedded-specific piece right now.

Chalmers / Software Center — Agentic Pipelines in Embedded SW Engineering

arXiv 2601.10220 · Swedish industry partners, relevant to you.

METR, July 2025: that −19 % study. Read it before you buy into the hype.

noxctl — my Fortnox CLI + MCP server: github.com/Magnus-Gille/noxctl.

All of today’s material will be published at coody.gille.ai soon.

Thank you.

Questions, thoughts, things we didn’t get to — let’s hear them now.